For decades, organizations have paid lip service to what truly drives people. AI is now removing every excuse — and presenting perhaps the greatest opportunity in the history of corporate culture to build organizations where people actually thrive.

The executives who have read Daniel Pink’s Drive: The Surprising Truth About What Motivates Us are often more dangerous than those who haven’t. They believe they’ve solved motivation. They’ve introduced purpose statements, carved out 20% time, gamified skill-development with digital badges, and then they wonder why engagement scores haven’t moved in a decade. Pink didn’t write a checklist. He described a psychological architecture. And most organizations have treated his ideas like a renovation: new paint on crumbling walls.

Here is what those leaders are about to discover: AI is arriving not as a threat to that architecture, but as its most powerful stress test — and its most extraordinary opportunity. Organizations that partner deliberately with AI will, for the first time, have the operational capacity to build what Pink envisioned. The ones that don’t will find AI accelerates exactly what was already broken.

The Performance of Motivation

Autonomy, as Pink defines it, is the urge to direct your own work. In most corporate implementations, it becomes input on the roadmap and flexible Fridays — the illusion of agency inside a pre-determined box. Mastery becomes a learning management system with mandatory modules. Purpose gets compressed onto a laminated poster in the break room.

The failure is structural. Pink’s framework describes what humans need; it says nothing about how organizations must be redesigned to provide it. Real autonomy requires genuine tolerance for failure. Real mastery requires time not instrumentalized toward quarterly targets. Real purpose requires honesty about what the work actually does in the world. Those conditions have always been expensive to create. AI is about to change that calculus entirely.

Most companies are not failing at motivation because they ignored Drive. They are failing because they read it as a communications strategy rather than an organizational design problem. AI won’t fix that misreading — but leaders who grasp the partnership will find themselves holding tools that can finally close the gap.

The Partnership That Changes Everything

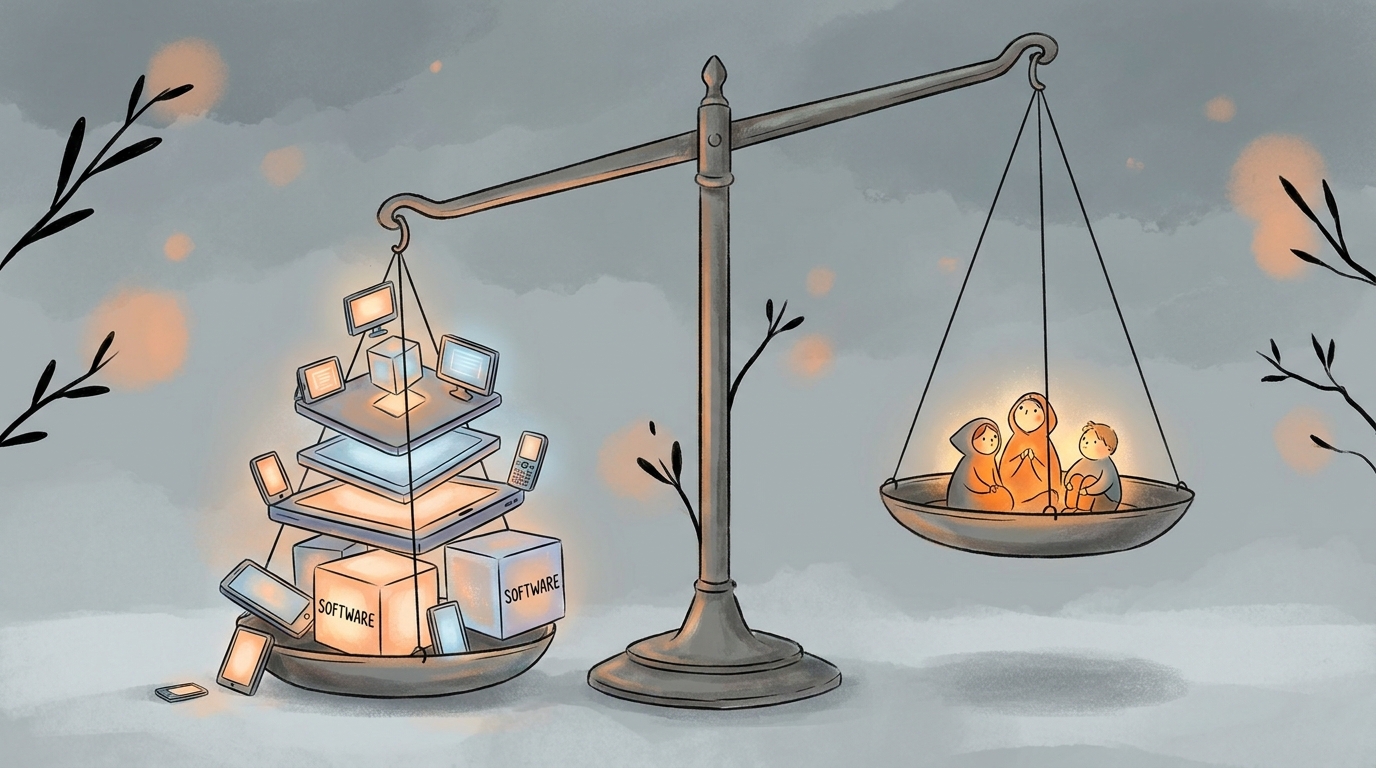

When AI absorbs the mechanical, the repetitive, and the cognitive-but-routine, it doesn’t diminish human work. It creates the conditions under which Pink’s framework can finally breathe. For the first time in the history of industrial organization, we have a technological partner capable of handling the tasks that were always getting in the way of what makes people extraordinary.

Think about what AI actually displaces: the low-autonomy work — compliance checking, report generation, first-draft assembly — that occupied hours and crushed the space for genuine direction. The mastery-blocking overhead that kept people too busy to think deeply about their craft. The transactional volume that made it impossible to connect individual effort to meaningful outcome. Strip those away, and something remarkable becomes possible: an organization where autonomy, mastery, and purpose are not aspirational values but structural realities.

“AI doesn’t just free up time. Partnered deliberately, it frees up the conditions under which human beings do their best work.”

Consider a financial services firm that deploys AI to absorb the mechanical modeling — data cleaning, scenario generation, variance checking. What remains for the analyst? Judgment. Client context. The nuanced read of what a number means for a specific business in a specific moment. The analyst who once spent 60% of their time on mechanics and 40% on insight can now invert that ratio. The work becomes, for the first time, genuinely worthy of them.

This is not utopian thinking. It is operational design. But it requires leaders to approach AI as a partner in motivational architecture — not a cost-reduction tool.

The Urgency You Are Underestimating

Organizations that deploy AI purely as an efficiency instrument — automating output, compressing headcount, optimizing throughput — will create environments of spectacular demotivation. Faster processes, hollower people. Their engagement scores will collapse in ways that baffle them, because they will have optimized for everything except the conditions that make people want to come to work.

The organizations that win this decade are moving right now to ask a different question: not “what can AI do?” but “what does AI make possible for our people?” That shift in framing is the difference between using a powerful tool carelessly and wielding it with strategic intent. The gap between those two paths is widening every quarter.

What Pink’s Framework Still Needs

Two things missing from Drive become critical in a human-AI partnership. First, legibility: the capacity to understand what you are doing and why it matters, even when AI handles much of the execution. As AI absorbs the operational layer, people need a deliberate view of the decision layer — or motivation collapses into purposeless oversight. Second, authorship: the sense that you are genuinely shaping something, not presiding over it. Autonomy in a world of AI copilots can easily become the autonomy to approve recommendations you don’t understand. That is not self-direction. That is a more sophisticated cage — and it is the default outcome if leaders are not intentional.

These are design requirements. Leaders who treat them as such will build organizations where the human-AI partnership produces something genuinely new: work that is more autonomous, more mastery-rich, and more purposeful than anything the pre-AI organization could offer.

A Motivational Architecture for the Partnership Era

PRINCIPLES FOR HUMAN–AI MOTIVATIONAL ARCHITECTURE

1. Deploy AI to clear the path to mastery, not around it. Use AI to eliminate the mechanical work that blocks deep practice, not the challenging work that is the point. Ask what the human should become more expert at, then design the AI’s role around that answer.

2. Redesign autonomy around decision rights, not task choice. Autonomy must mean authority over consequential choices: what to pursue, what to reject, where to draw ethical lines. If people are only deciding how to prompt a system, they don’t have autonomy. They have the appearance of it.

3. Anchor purpose before deploying AI, not after. Organizations without a clear account of their purpose will find AI accelerates their strategic confusion. Purpose is the prerequisite for intelligent decisions about what to automate, and the only force capable of making the partnership feel meaningful rather than merely efficient.

4. Build legibility and authorship into the system design. People need to understand what AI is doing around them and feel they are genuinely directing it, not the reverse. This is not a training problem. It is an architectural one.

5. Measure what the partnership is doing to motivation, not just to output. Standard engagement surveys won’t detect the erosion of mastery or the inflation of hollow autonomy. If the only metrics you track are efficiency gains, you are flying blind on the dimension that matters most.

Pink argued that the carrot-and-stick model was built for an earlier era — one that demanded compliance, not creativity. He was right. For fifteen years, most organizations responded by replacing the stick with a lanyard and a snack bar. AI ends that era of comfortable inaction. It places in leaders’ hands the leverage to finally build what Pink described: organizations where people are genuinely self-directed, genuinely growing, and genuinely connected to work that matters.

The default — AI deployed without motivational intention — produces something darker: organizations that are faster, cheaper, and profoundly empty. The difference between that future and the one worth building is a leadership choice available right now. The organizations making it deliberately are already pulling ahead.

The question is whether you will use AI’s reshaping of work to finally build what Pink described, and discover that the partnership between human purpose and AI capability produces something neither could achieve alone. That future is not inevitable. But for the first time, it is buildable.
