Here’s something most leaders might not realize: their people are almost certainly using AI tools they don’t know about, on accounts they don’t control, for work they’ll never see the full picture of. 45% of U.S. workers admit to using AI at work without telling their managers, and two-thirds pay for the tools out of their own pockets (Gusto, 2025). Meanwhile, three-quarters of C-suite executives recently told WRITER’s research team that their own company’s AI strategy is “more for show” than real guidance.

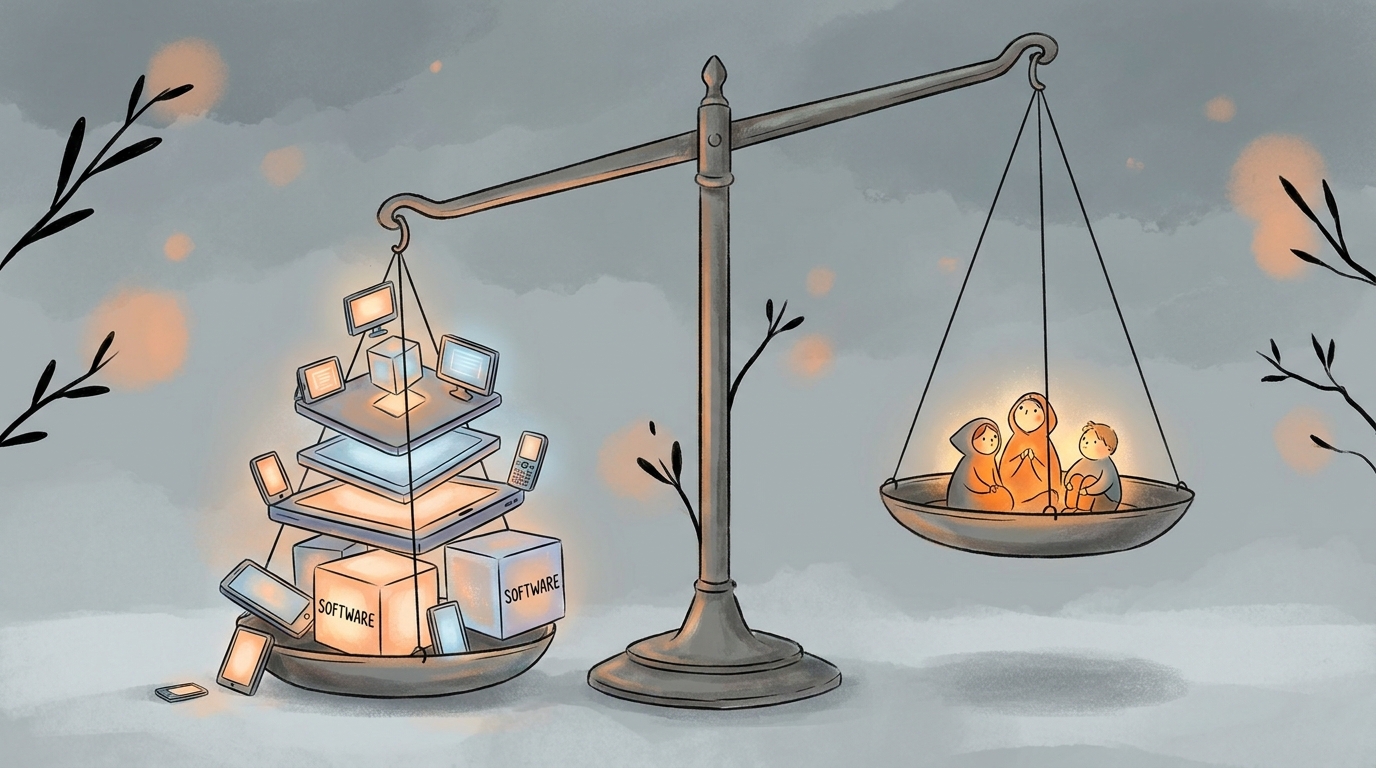

So here is where we are: leadership is pouring millions into AI strategies, while employees have quietly made AI part of their daily work — generating insight, skill, and innovation the organization hasn’t seen yet. There’s a name for what’s happening. It’s called Shadow AI.

Shadow AI is what happens when AI use runs ahead of AI policy. It looks like an employee who built a custom GPT to rewrite their emails in a way that better matches the company’s voice, something the rest of their team would benefit from if anyone knew it existed. It looks like another who used Claude to compress a six-hour data set, then dropped it into NotebookLM to generate an audio overview they listened to on their commute, producing insights no one else on the project will ever hear. And it looks like a third who built an app in Google AI Studio to summarize a week of customer interviews, a tool their colleagues could be using too if the app were shared. It is, in short, people doing what they have always done: finding efficient ways to get their work done, using the tools they can actually access.

What’s different this time is the invisibility. When someone adopted a better spreadsheet template, the artifact was visible. With AI, the artifact is the work itself. You can’t tell, from looking at a finished deck or a polished email, whether it took three hours or twenty minutes — or what tool produced it. So the productivity gains and the skill-building all go unseen.

The early organizational response to Shadow AI has been predictable: restrict the tools, publish a policy, and run everyone through compliance training. But there’s a catch: people find workarounds. When companies block AI on the corporate network, employees log into personal accounts from their work browser, use their phones, or do the work from home and copy the results back in later, bypassing controls entirely. Bans don’t stop AI use; they just push it underground. And hidden use is far worse than visible use, because it means:

- The organization can’t see what data is leaving (a real concern — LayerX’s 2025 security report found that 77% of AI users copy-paste data into chatbots, and 22% of those pastes contain personally identifiable or payment information)

- The organization can’t learn from what’s working (the employee who figured out how to automate 90% of a painful process doesn’t share that — why would they?)

- The organization can’t support its people (training, guardrails, and skill-building become impossible when no one will admit what they’re doing)

Consider BBVA, the Spanish bank. Rather than restricting employee AI use, they deployed ChatGPT Enterprise broadly and invited people to build. Per OpenAI’s public case study, more than 11,000 BBVA employees now use the tool, they’ve created thousands of custom GPTs, people report saving nearly three hours per week on routine tasks, and over 80% engage with it daily. (BBVA is now expanding the rollout to its full 120,000-person global workforce.) The organizational question turns out not to be “how do we stop Shadow AI?” It’s “what are our people trying to tell us by going underground?” The honest answer, most of the time, is: we need tools that actually help us, and we need permission to use them.

The answer isn’t surveillance, and it isn’t prohibition. It is making AI use legible and building the organizational muscle to learn from what people are actually doing with it. Ethan Mollick calls the employees at the center of this phenomenon “secret cyborgs.” The job of a leader is to give them a reason to come out of the shadows.

So what does “bringing it into the open” actually look like? This is where a lot of leaders get stuck. “Invite people to share” sounds nice in a blog post; it’s harder in practice. Here is what we’ve seen actually work, both inside our own organization and with the companies we partner with.

Give your people a real sanctioned tool, not a compromised one. Shadow AI thrives in the gap between what employees need and what their employer provides. If the approved option is a locked-down internal chatbot that nobody wants to use, people will keep opening their personal ChatGPT tab. Team or enterprise subscriptions to tools like Claude, ChatGPT, or Gemini, with proper data-privacy guardrails, remove the main reason people reach for their personal tools. In our own organization, our team subscription means nobody has a reason to paste sensitive content into a personal account.

Train people on actual best practices, not just policy. Most corporate AI training today is a compliance module: here’s what you can’t do, sign this acknowledgment, back to work. Real training teaches people what prompts work, what AI is bad at, how to verify outputs, how to protect sensitive information, and how to integrate AI into their specific role. A marketing coordinator, a financial analyst, and a customer success manager do not need the same session. Build training around the work, not around the tool.

Run a hackathon to surface your real pain points. We recently ran a hackathon with one of our partner organizations where staff spent a day identifying their most time-consuming pain points and then built AI-powered solutions to address them. The value was in the solutions, and in the visibility. Leadership walked away with a concrete map of where AI was actually useful in their organization, and staff walked away with permission, skills, and peers who now knew they were cyborgs too. A hackathon does in a day what an AI policy committee does in six months: it produces clarity on where AI belongs in your work, and it brings the shadow into the light.

Codify your organization’s voice in a master prompt. One thing that has made AI dramatically more useful inside our organization is a shared master prompt — a prompt that captures who we are, how we communicate, what our values are, what our clients need from us — which we use whenever we analyze our materials or generate new ones. It means AI outputs are aligned to us from the first draft, rather than requiring hours of revision to sound like us. If every person in your organization is rewriting that context from scratch every time, the work adds up — and the outputs drift in tone and consistency. A master prompt is a high-leverage fix.

If you are leading an organization right now, the most useful question isn’t “do we have a Shadow AI problem?” It’s almost certain that you do — every organization at this moment does. The better question is whether you are building the conditions for your cyborgs to come forward: a culture where someone who found a better way to handle a repetitive task gets recognized for the improvement, where an employee who built a tool their team could use feels welcome to share it rather than keep it to themselves.

The organizations that will get the most value from AI over the next five years won’t be the ones with the biggest strategy decks. They’ll be the ones whose people trust them enough to say, “Here’s what I’m actually doing with this, and here’s what I’ve learned.” The work is already happening. The opportunity is to figure it out together.
